Nine Years Building a Master Data Management and Data Quality Platform – Here’s What We Learned

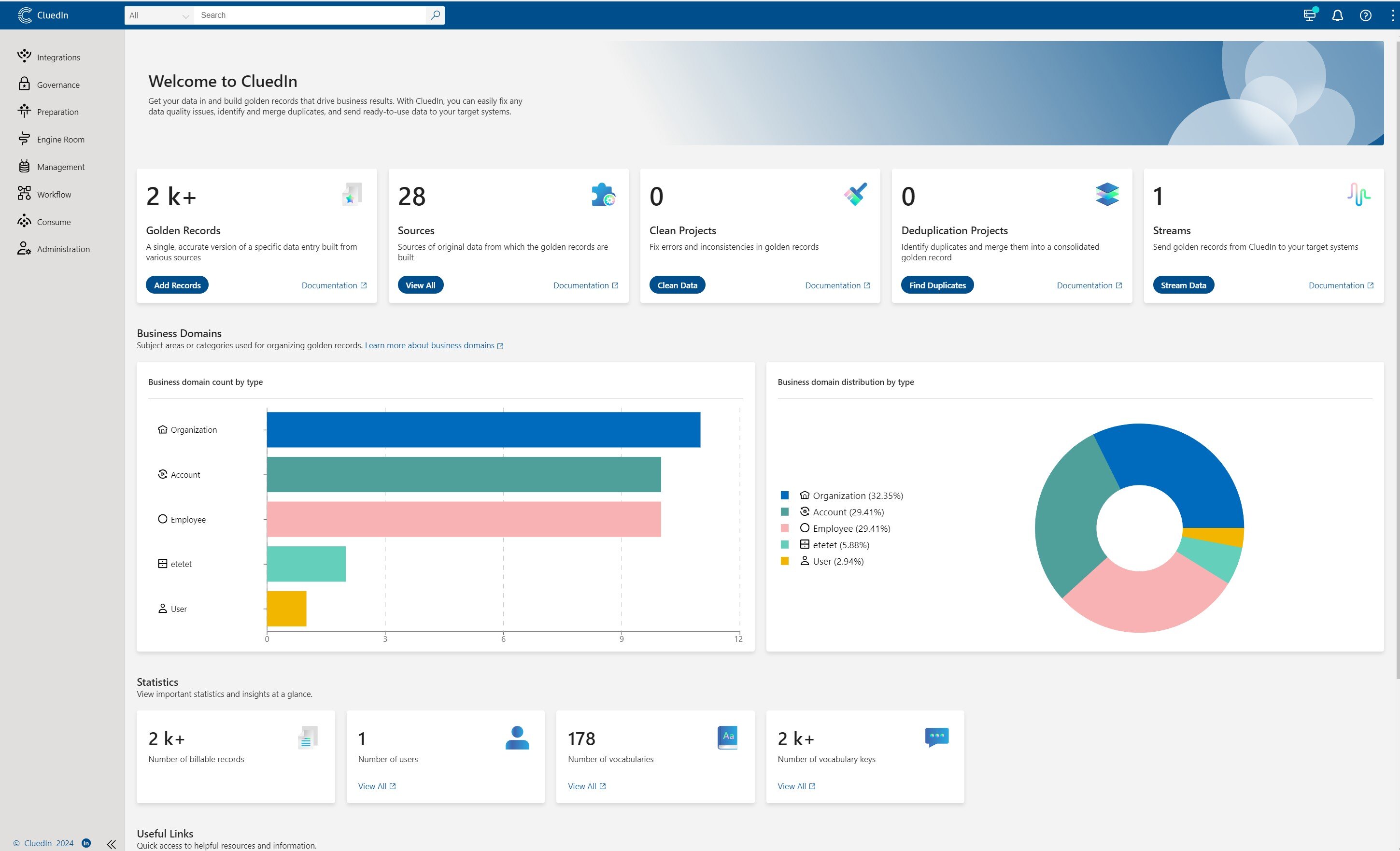

For the last nine years, we at CluedIn have been building a platform for master data management and data quality. Along the way, we’ve run into countless challenges, learned from our customers, and seen first-hand how much good data can transform a business. Here are the top insights from nearly a decade of building a platform designed to meet the real needs of modern businesses.