How to Fix Inconsistent Master Data Between ERP and CRM Systems

The most effective long-term solution is to implement Master Data Management (MDM) to maintain a persistent, governed golden record across systems.

Quick answer: Fix inconsistent master data between ERP and CRM by defining an authoritative source per data domain, cleansing duplicates, creating explicit mapping and transformation rules, implementing conflict resolution and validation, selecting the right integration architecture, and monitoring continuously with governance and automation.

Define scope and ownership: agree which domains matter most (customers, products, pricing, orders) and assign an authoritative source per domain.

Audit current data: profile ERP and CRM for completeness, duplicates, and conflicting identifiers.

Cleanse and standardize: deduplicate, normalize formats, and align key IDs before syncing.

Map and transform: document field mappings and transformation rules (split/merge, 1-to-many).

Select architecture: choose among batch ETL, ELT, API-driven sync, iPaaS, or bi-directional sync based on latency requirements and transformation complexity.

Resolve conflicts: implement precedence rules, validation, and deduplication to prevent sync corruption.

Monitor and govern: add logging, alerts, KPIs, data stewardship, and continuous improvement loops.

Inconsistent master data occurs when ERP and CRM systems store conflicting, duplicate, or outdated versions of the same core business entities (customers, products, pricing, orders). The result is operational friction: incorrect pricing, duplicate outreach, delayed orders, and reporting discrepancies.

What is data decay? Data decay (also called data rot) is the gradual degradation of data quality over time due to unsynchronized systems, manual errors, format inconsistencies, and lack of governance, leading to duplicates, outdated records, and increased operational risk.

An authoritative source is the system responsible for maintaining the most accurate and trusted version of a specific data domain. Without defined ownership, bi-directional integrations create conflict loops and “overwrite wars.”

Map the domains where inconsistencies cause the most pain:

| Data domain | Common authoritative system | Why |
|---|---|---|
| Product names, codes, descriptions | ERP | ERP typically owns product catalog + fulfillment context |
| Pricing and inventory levels | ERP | Operational truth for stock + pricing rules |
| Customer relationship attributes | CRM | CRM captures sales engagement + relationship context |
| Sales activities and pipeline | CRM | Commercial ownership and velocity |
| Orders and fulfillment status | ERP | Execution system of record |

For each domain, also document:

Data owners: who approves changes per domain

Override rules: when CRM can override ERP (or vice versa)

Exceptions: regional variations, legacy migrations, acquisitions

Stewardship workflow: how disputes are escalated and resolved
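
The ownership table and rules above can be captured as configuration that the integration checks before every write. The following is a minimal sketch, not a prescribed implementation: the domain keys, steward role names, and the `overridable_fields` example are all hypothetical placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DomainOwnership:
    authoritative_system: str  # "ERP" or "CRM"
    data_owner: str  # hypothetical role that approves changes
    # Fields the non-authoritative system may still override.
    overridable_fields: frozenset = frozenset()


# Assumed domain names and stewards, loosely following the table above.
OWNERSHIP = {
    "product": DomainOwnership("ERP", "product-data-steward"),
    "pricing": DomainOwnership("ERP", "pricing-steward"),
    "customer_relationship": DomainOwnership(
        "CRM", "sales-ops-steward", frozenset({"billing_contact"})
    ),
    "orders": DomainOwnership("ERP", "order-management-steward"),
}


def may_write(system: str, domain: str, field: str) -> bool:
    """Return True if `system` is allowed to change `field` in `domain`."""
    rule = OWNERSHIP.get(domain)
    if rule is None:
        # Unknown domain: refuse and escalate to stewardship instead of guessing.
        return False
    if system == rule.authoritative_system:
        return True
    return field in rule.overridable_fields
```

Keeping these rules in one place means an override exception (for example, after an acquisition) becomes a reviewed configuration change rather than an ad hoc patch in a sync job.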

You cannot synchronize what you do not trust. Start by profiling both ERP and CRM to surface the current state of master data quality.
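
As an illustration of what such profiling might look like, the sketch below measures field completeness, finds duplicated keys within one system, and lists records that share an email across systems but carry different IDs. It assumes records are already exported as lists of dictionaries; the field names (`customer_id`, `email`, `name`) are hypothetical.

```python
from collections import Counter


def profile(records, key_field, required_fields):
    """Summarize completeness and duplicate keys for one system's export."""
    total = len(records)
    key_counts = Counter(r.get(key_field) for r in records if r.get(key_field))
    duplicate_keys = sorted(k for k, n in key_counts.items() if n > 1)
    completeness = {
        f: (sum(1 for r in records if r.get(f) not in (None, "")) / total)
        if total
        else 0.0
        for f in required_fields
    }
    return {
        "total": total,
        "duplicate_keys": duplicate_keys,
        "completeness": completeness,
    }


def conflicting_ids(erp_records, crm_records, join_field, id_field):
    """Find records that share `join_field` but disagree on `id_field`."""
    erp_by_join = {
        r[join_field]: r.get(id_field) for r in erp_records if r.get(join_field)
    }
    conflicts = []
    for r in crm_records:
        join_value = r.get(join_field)
        if join_value in erp_by_join and r.get(id_field) != erp_by_join[join_value]:
            conflicts.append((join_value, erp_by_join[join_value], r.get(id_field)))
    return conflicts


erp = [
    {"customer_id": "C1", "email": "a@x.com", "name": "Acme"},
    {"customer_id": "C1", "email": "b@x.com", "name": ""},
    {"customer_id": "C2", "email": "c@x.com", "name": "Beta"},
]
crm = [
    {"customer_id": "C9", "email": "a@x.com"},
    {"customer_id": "C2", "email": "c@x.com"},
]

report = profile(erp, "customer_id", ["email", "name"])
mismatches = conflicting_ids(erp, crm, "email", "customer_id")
```

Here `report["duplicate_keys"]` is `["C1"]` and `mismatches` contains the one email whose ERP and CRM IDs disagree.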

Data mapping is the explicit definition of how fields and structures in ERP correspond to fields in CRM, including transformation logic needed to keep meaning consistent.

| ERP structure/field | CRM structure/field | Transformation rule |
|---|---|---|
| Party / Account / Site / Site Use | Account | Define 1-to-many mapping and “primary site” logic |
| FullName | FirstName + LastName | Split using rules; handle edge cases (multi-part surnames) |
| AddressLine1..n | Street / City / Region / PostalCode | Normalize and validate; standardize country/region formats |
| ItemCode / MaterialID | ProductCode | Enforce uniqueness; block invalid formats at ingestion |

Rule of thumb: If mapping isn’t documented, it doesn’t exist. And if it doesn’t exist, your integration will drift.
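
Two of the transformation rules in the mapping table can be sketched in code to show what "documented" might mean in practice. This is an illustrative sketch under stated assumptions: the surname particle list is a small, incomplete sample, and the product code format is invented for the example, so both would need replacing with your own rules.

```python
import re

# Assumed, deliberately incomplete list of particles that belong to a surname.
SURNAME_PARTICLES = {"van", "von", "de", "der", "den", "da", "di", "la", "le", "del"}

# Assumed format: alphanumeric groups separated by single hyphens, e.g. "AB-123".
PRODUCT_CODE_PATTERN = re.compile(r"[A-Z0-9]+(-[A-Z0-9]+)*")


def split_full_name(full_name: str) -> tuple:
    """Split FullName into (FirstName, LastName), keeping particles with the surname."""
    parts = full_name.split()
    if not parts:
        return "", ""
    if len(parts) == 1:
        return parts[0], ""
    # Start the surname at the last token, then extend it leftwards over particles,
    # always leaving at least one token for the first name.
    idx = len(parts) - 1
    while idx > 1 and parts[idx - 1].lower() in SURNAME_PARTICLES:
        idx -= 1
    return " ".join(parts[:idx]), " ".join(parts[idx:])


def to_product_code(item_code: str) -> str:
    """Normalize an ERP ItemCode and reject values that do not fit the format."""
    code = item_code.strip().upper()
    if not PRODUCT_CODE_PATTERN.fullmatch(code):
        raise ValueError(f"invalid product code: {item_code!r}")
    return code


print(split_full_name("Ludwig van Beethoven"))  # ('Ludwig', 'van Beethoven')
print(to_product_code(" ab-123 "))  # AB-123
```

Rule-based name splitting will always miss some cases, which is why rejected or ambiguous values are better routed to a steward than silently guessed.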

The best integration approach depends on two variables: latency requirements and transformation complexity.

| Pattern | Typical latency | Best for | Watch-outs |
|---|---|---|---|
| Batch ETL | Minutes to days | Heavy transformation, legacy systems | Staleness between runs; conflict handling often weak |
| ELT (cloud-native) | Minutes to hours | Cloud data platforms, analytics | Operational systems still need mastering and validation |
| API-driven sync | Near real-time | Operational workflows | Requires strong validation + retries + idempotency |
| Bi-directional sync | Near real-time | Shared ownership scenarios | High conflict risk without precedence + coordination |
| iPaaS | Varies | Connector management at scale | “Bi-directional” often means two one-way jobs |
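
To make the "retries + idempotency" watch-out for API-driven sync concrete, here is a minimal sketch. `InMemoryTarget` and `TransientError` are hypothetical stand-ins for a real ERP or CRM write API and its timeout errors; the idempotency key is simply a hash of the record content, which is one possible choice among several.

```python
import hashlib
import json
import time


class TransientError(Exception):
    """Hypothetical error for timeouts or rate limits that are worth retrying."""


def idempotency_key(record: dict) -> str:
    """Derive a stable key from record content so replays can be detected."""
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


class InMemoryTarget:
    """Stand-in for a target system's write API (assumption, not a real client)."""

    def __init__(self):
        self.rows = {}
        self.applied_keys = set()
        self.writes = 0

    def upsert(self, record: dict, key: str) -> bool:
        if key in self.applied_keys:
            return False  # duplicate delivery: nothing to do
        self.rows[record["id"]] = record
        self.applied_keys.add(key)
        self.writes += 1
        return True


def sync_with_retry(target, record, max_attempts=3, base_delay=0.5) -> bool:
    """Send one record, retrying transient failures with exponential backoff."""
    key = idempotency_key(record)
    for attempt in range(1, max_attempts + 1):
        try:
            return target.upsert(record, key)
        except TransientError:
            if attempt == max_attempts:
                raise  # hand over to a dead-letter queue or alert in real use
            time.sleep(base_delay * 2 ** (attempt - 1))
```

Because the key is derived from content, resending the same change after a timeout is harmless, while a genuinely new value for the same record produces a new key and is applied.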

Conflict resolution is predefined logic that determines which version of a record should prevail when ERP and CRM updates disagree.

Field-level validation: enforce required formats and values (not just record-level checks)

Precedence rules: system priority per domain (ERP wins product; CRM wins engagement), plus field-level overrides

Deduplication: prevent duplicates from propagating (match on email/domain/ID + fuzzy similarity)

Change coordination: avoid “ping-pong updates” when both systems modify the same record

Error handling: retries, dead-letter queues, exception alerts, reconciliation jobs
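
The precedence and change-coordination items above can be sketched together. In this hypothetical example, each field has a winning system, the losing system fills gaps only when the winner has no value, and a write is issued only for fields that actually differ, which is one simple way to stop ping-pong updates. The field names and the precedence map are assumptions for illustration.

```python
# Assumed field-level precedence; anything unlisted falls back to a default winner.
FIELD_PRECEDENCE = {
    "product_code": "ERP",
    "price": "ERP",
    "account_owner": "CRM",
    "last_contacted": "CRM",
}


def resolve(erp: dict, crm: dict, default_winner: str = "ERP") -> dict:
    """Merge two versions of a record using field-level precedence."""
    merged = {}
    for field in sorted(set(erp) | set(crm)):
        winner = FIELD_PRECEDENCE.get(field, default_winner)
        primary, secondary = (erp, crm) if winner == "ERP" else (crm, erp)
        value = primary.get(field)
        if value in (None, ""):
            # The winning system has no value, so accept the other system's.
            value = secondary.get(field)
        merged[field] = value
    return merged


def changes_to_push(merged: dict, current: dict) -> dict:
    """Return only the fields that differ; an empty result means no write at all."""
    return {f: v for f, v in merged.items() if current.get(f) != v}


erp = {"product_code": "P-1", "price": 10.0, "account_owner": ""}
crm = {"product_code": "P-01", "price": 9.5, "account_owner": "dana"}

merged = resolve(erp, crm)
to_erp = changes_to_push(merged, erp)  # {'account_owner': 'dana'}
to_crm = changes_to_push(merged, crm)  # {'price': 10.0, 'product_code': 'P-1'}
```

Once both systems hold the merged values, `changes_to_push` returns an empty dictionary for each, so neither side triggers a further update.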

Hard truth: Bi-directional sync without conflict logic is not integration; it’s automated corruption.

Continuous improvement means regularly reviewing, testing, and enhancing synchronization processes to adapt to system upgrades, business changes, and new data sources, so that data does not decay over time.

Data governance is the set of processes, roles, and standards that ensure enterprise data remains accurate, secure, compliant, and fit for purpose across its lifecycle.

Traditional ERP–CRM projects treat this as a synchronization problem: “move records between systems.” Modern enterprises treat it as a mastering problem: “maintain a governed golden record that systems consume.”

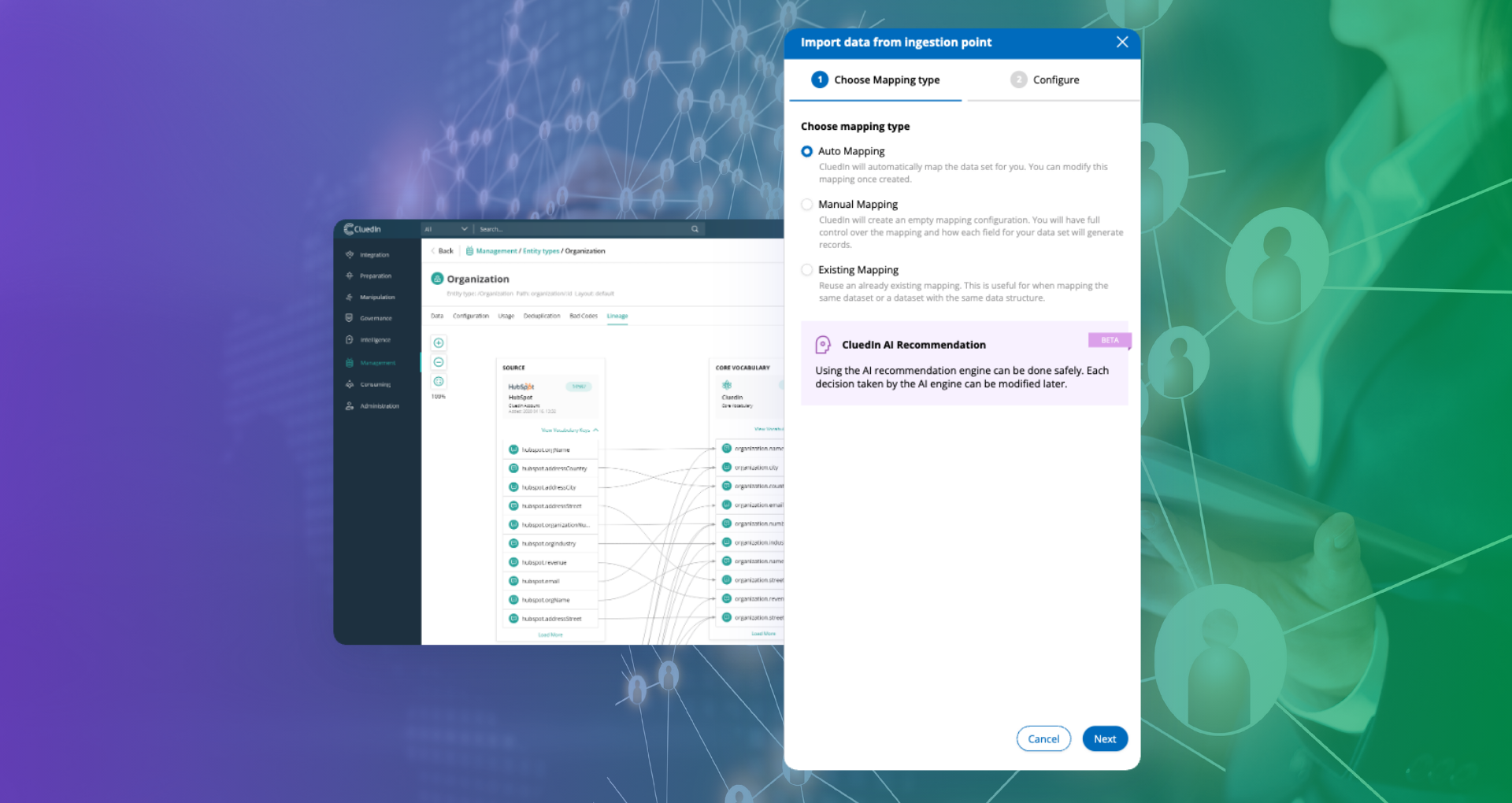

CluedIn is a modern, graph-native Master Data Management platform designed for continuous data quality improvement at enterprise scale.

Persistent knowledge graph: master data exists as a connected, queryable graph, not isolated tables.

Agentic automation: autonomous AI agents continuously detect drift, duplicates, and inconsistencies.

Entity resolution: matching and mastering across ERP and CRM happens continuously, not quarterly.

Context-aware conflict handling: rules and policies are applied at field and domain level, with exceptions surfaced.

Governance built-in: ownership and policy enforcement are operational, not aspirational.

Integration-ready: publish mastered data back to ERP/CRM and into modern ecosystems (including Microsoft Fabric patterns).

Inconsistent master data is typically caused by unclear system ownership (no authoritative source), poor or undocumented field mapping, schema and format differences, one-way or unreliable synchronization, missing conflict-resolution rules, and weak ongoing governance. These gaps lead to duplicates, conflicting values, and records drifting out of sync over time.

Fix inconsistent master data by defining an authoritative source for each domain, auditing and cleansing existing records, creating explicit mapping and transformation rules, implementing conflict resolution and validation, selecting an integration pattern that fits latency and complexity needs, and monitoring continuously with governance and automated quality checks.

Effective synchronization requires clear source-of-truth rules, documented mappings, and conflict logic. Use batch ETL/ELT when heavy transformation is needed, API-driven flows for near-real-time operational requirements, and bi-directional sync only with coordinated change detection, validation, and precedence rules to prevent conflict loops.

MDM resolves ERP–CRM inconsistencies by establishing a governed master record (golden record) for core entities like customers and products. It continuously reconciles changes, deduplicates records, applies standards and ownership rules, and publishes trusted master data back to ERP and CRM to reduce conflicts and manual remediation.

Improve data mapping by defining field-level correspondence, standardizing identifiers, documenting transformation rules (split/merge, one-to-many mappings), and validating formats and required fields at ingestion. Pair mapping with deduplication rules (deterministic and probabilistic matching) so duplicate entities are detected before they propagate.
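
A minimal sketch of pairing deterministic and probabilistic matching follows. It uses Python's standard-library `difflib.SequenceMatcher` as a stand-in for a proper entity-resolution engine; the `0.85` threshold and the `name` and `email` field names are assumptions that would need tuning against real data.

```python
from difflib import SequenceMatcher


def normalize(value: str) -> str:
    """Lowercase and collapse whitespace so formatting noise does not block a match."""
    return " ".join(value.lower().split())


def is_duplicate(a: dict, b: dict, threshold: float = 0.85) -> bool:
    """Deterministic match on email first, then fuzzy match on name."""
    email_a = normalize(a.get("email", ""))
    email_b = normalize(b.get("email", ""))
    if email_a and email_a == email_b:
        return True  # deterministic: identical non-empty email

    name_a = normalize(a.get("name", ""))
    name_b = normalize(b.get("name", ""))
    if not name_a or not name_b:
        return False  # nothing to compare; do not treat two blanks as a match
    similarity = SequenceMatcher(None, name_a, name_b).ratio()
    return similarity >= threshold  # probabilistic: similar enough names


erp_customer = {"name": "Acme Corp.", "email": ""}
crm_customer = {"name": "ACME  corp", "email": "ops@acme.example"}
print(is_duplicate(erp_customer, crm_customer))  # True
```

Name similarity alone produces false positives for short or generic names, so fuzzy matches are usually better queued for steward review than merged automatically.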

Use real-time integration when operational decisions depend on immediate consistency (e.g., inventory, pricing, order status). Use batch processing for analytics or high-volume transfers where latency is acceptable and transformations are heavy. Many enterprises use a hybrid: real-time for operational sync and batch for downstream reporting.

Prevent data decay by continuously validating and monitoring key master data domains, enforcing governance and stewardship, applying automated deduplication and anomaly detection, and implementing a mastering layer that reconciles changes across systems rather than relying on periodic cleanups. Measure drift using KPIs like duplicate rate, completeness, and sync failure frequency.
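
The KPIs named above can be computed from a record export plus sync job counters and compared against thresholds. The sketch below is illustrative only: the threshold values and field names are invented, and a real setup would read the counters from the integration platform's logs.

```python
def rate(numerator: float, denominator: float) -> float:
    """Safe ratio that returns 0.0 when there is nothing to measure."""
    return numerator / denominator if denominator else 0.0


def drift_kpis(records, key_field, required_fields, sync_attempts, sync_failures):
    """Compute duplicate rate, record completeness, and sync failure rate."""
    total = len(records)
    distinct_keys = len({r.get(key_field) for r in records})
    complete = sum(
        1
        for r in records
        if all(r.get(f) not in (None, "") for f in required_fields)
    )
    return {
        "duplicate_rate": rate(total - distinct_keys, total),
        "completeness": rate(complete, total),
        "sync_failure_rate": rate(sync_failures, sync_attempts),
    }


def breached(kpis: dict, thresholds: dict) -> list:
    """Return the names of KPIs that fall outside their ("max" | "min", limit) rule."""
    out = []
    for name, (kind, limit) in thresholds.items():
        value = kpis[name]
        if (kind == "max" and value > limit) or (kind == "min" and value < limit):
            out.append(name)
    return out


# Hypothetical thresholds; agree on real values with the data owners.
THRESHOLDS = {
    "duplicate_rate": ("max", 0.02),
    "completeness": ("min", 0.95),
    "sync_failure_rate": ("max", 0.10),
}

records = [
    {"customer_id": "C1", "email": "a@x.com"},
    {"customer_id": "C1", "email": "a@x.com"},
    {"customer_id": "C2", "email": ""},
    {"customer_id": "C3", "email": "c@x.com"},
]
kpis = drift_kpis(records, "customer_id", ["email"], sync_attempts=100, sync_failures=5)
alerts = breached(kpis, THRESHOLDS)  # ['duplicate_rate', 'completeness']
```

Tracking these values per domain over time shows whether quality is improving or drifting, which a one-off cleanup cannot tell you.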